AI in Healthcare Compliance: Building HIPAA-Compliant Intelligent Patient Care Systems

- Introduction

- Why HIPAA Compliance Matters in Healthcare AI?

- Key Regulations in AI in Healthcare Compliance

- How HIPAA-Compliant AI Systems Work and Are Built?

- Key Challenges in Healthcare AI Implementation

- How MultiQoS Builds Secure AI Systems for Healthcare

- Conclusion

- FAQs

Summary

Healthcare AI is becoming part of everyday hospital systems now. It’s used for diagnosis, tracking patients, and handling medical records, but none of that really matters if patient data isn’t protected properly under HIPAA.

The bigger issue is that healthcare data doesn’t stay in one place. It moves between hospital systems, cloud tools, and EHR platforms, and every step adds risk if security isn’t already built in.

On top of that, most hospitals are still running on old systems, while also trying to meet strict compliance rules and trust AI decisions in real situations. That mix makes things harder than they look on paper.

At the end of the day, healthcare AI only works when it actually fits into real hospital workflows and keeps patient data safe at every step.

Introduction

Healthcare teams increasingly rely on AI to support their daily work.

Doctors use it to review cases faster, monitor changing patient conditions, and respond quickly when something doesn’t look right.

As hospitals adopt these systems, AI in healthcare compliance has become a serious focus. Large volumes of sensitive patient data flow through these systems, so teams must handle everything carefully and ensure strong security at every step.

To build such secure and scalable solutions, organizations often depend on healthcare software development services for proper system design and integration.

That’s where things get tricky. Data breaches in healthcare are expensive: recent estimates put the average cost at around $7.42 million per incident. For organizations using AI, this makes security a real concern rather than just a checkbox. It’s no longer only about improving performance or saving time.

Organizations must safeguard patient data at every step.

This blog explains how healthcare teams can build AI systems that are secure and reliable. It also shows how following compliance standards helps protect patient information while improving care delivery.

Why HIPAA Compliance Matters in Healthcare AI?

HIPAA sets clear rules for healthcare teams on how patient data should be handled, including medical reports, records, and any information that can identify a patient. Now with AI in healthcare becoming part of daily hospital work, a lot more of this sensitive data flows through different systems, so teams can’t afford to be careless anymore. This is also why healthcare app development must follow HIPAA standards from the design stage itself.

Hospitals use AI to make work faster and help doctors with decisions. But as this use grows, things also get riskier. Even a tiny mistake in the system can expose data, and that directly affects the safety of the patients.

If HIPAA rules are ignored, the damage is not only about fines or legal issues. The bigger problem is trust. Once patients feel their data isn’t safe, they stop being open with doctors, and that creates real problems in treatment. Most patients only share full details when they feel confident about privacy. If that confidence drops, they naturally hold back information, which affects how care decisions are made.

At this point, following HIPAA compliance in AI isn’t optional anymore. Many healthcare teams now rely on AI-based compliance tools to manage systems and protect patient data more effectively.

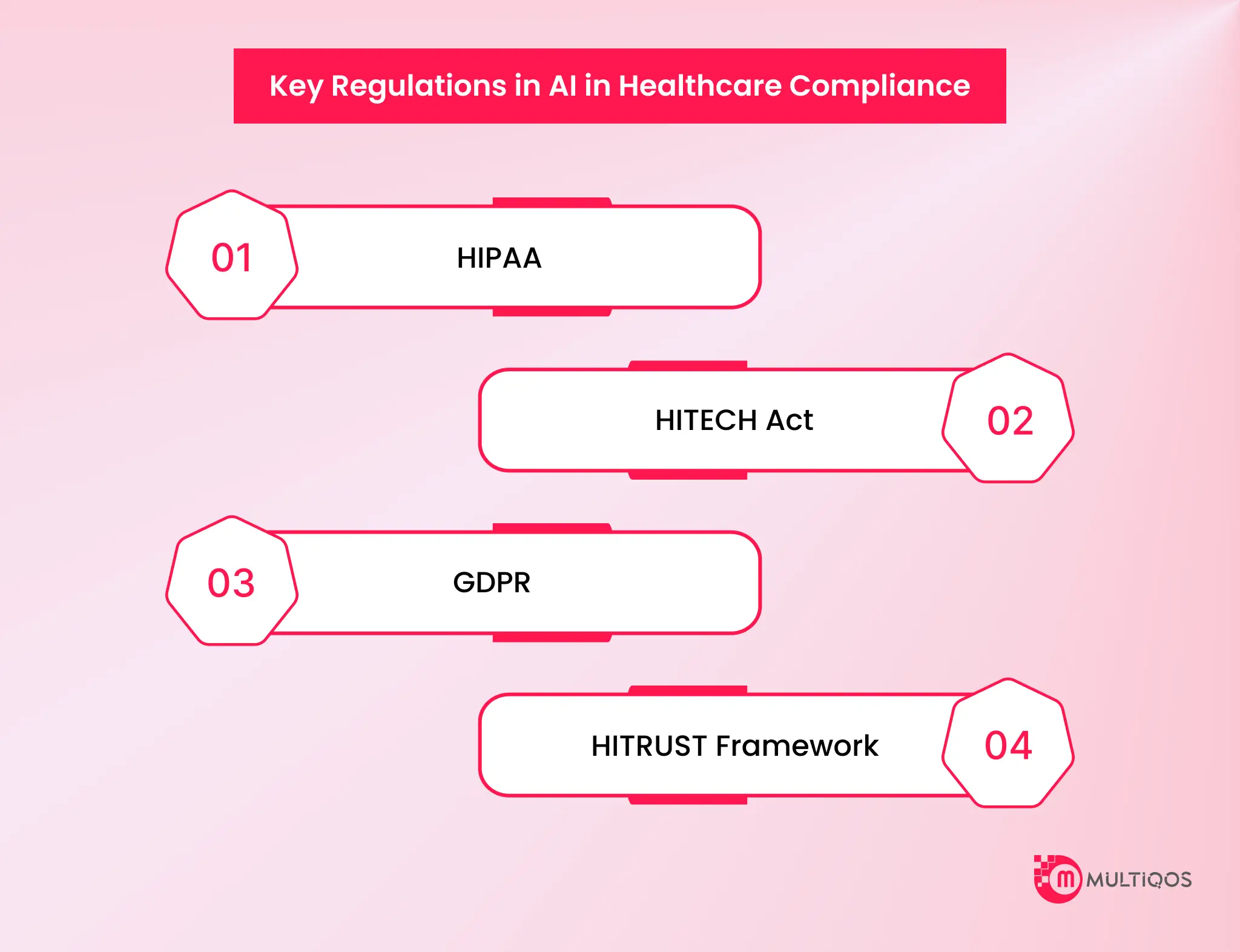

Key Regulations in AI in Healthcare Compliance

Healthcare AI systems don’t run on their own rules. Hospitals and tech teams follow strict regulations to keep patient data safe. This ensures everything works smoothly in real healthcare environments.

HIPAA

HIPAA is the main U.S. law governing how patient health data is handled. It makes sure sensitive information is not misused or exposed. Hospitals use encryption, access control, and audit logs to protect data.

HITECH Act

HITECH builds on HIPAA. It pushes healthcare systems toward digital records. It also makes data breach reporting stricter. This ensures security issues are handled on time.

GDPR

GDPR is the European Union’s regulation on personal data privacy and control. It allows individuals to access their data. They can also update it or request deletion when needed.

HITRUST Framework

HITRUST brings multiple security standards into one structure. It combines HIPAA, ISO, and NIST guidelines. This helps healthcare teams manage compliance in a more organized way.

These regulations don’t work separately. In real healthcare systems, they directly shape how AI tools are designed. They also influence how data moves and how securely systems operate in daily hospital workflows.

Together, these frameworks define how compliant healthcare AI systems handle patient data securely across regions and platforms.

Now, let’s look at HIPAA in more detail and understand how it actually works inside healthcare AI systems.

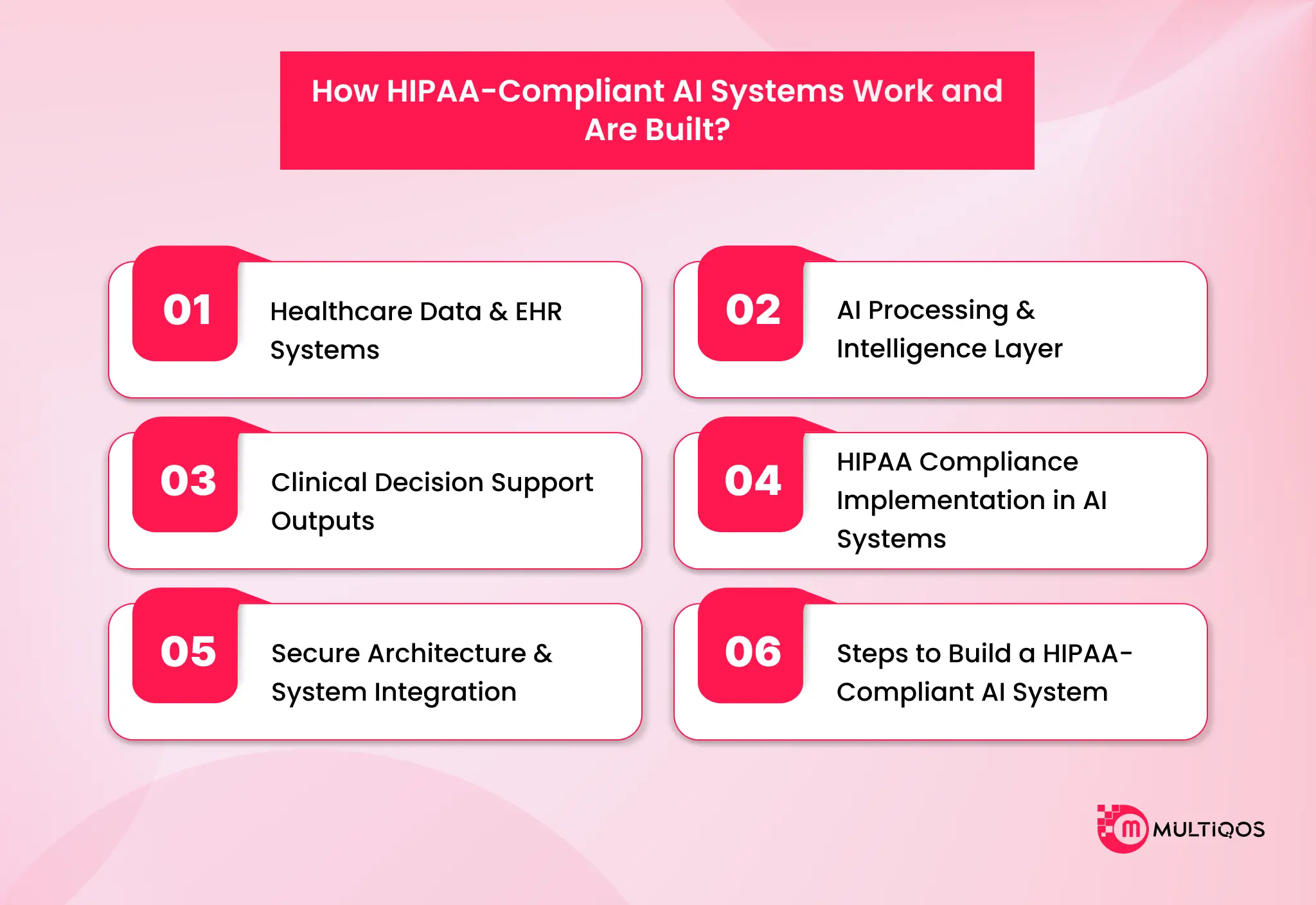

How HIPAA-Compliant AI Systems Work and Are Built?

This part explains HIPAA-compliant AI healthcare systems used in hospitals. It shows how teams build these systems from the very beginning. It also explains how teams handle patient information, how AI uses this data, and how they keep everything safe and secure.

Healthcare Data & EHR Systems

Healthcare data exists across hospitals, labs, medical devices, and external platforms.

In modern AI in healthcare compliance systems, managing this distributed data securely is one of the biggest challenges. IBM says nearly 80% of healthcare data is unstructured, which makes it difficult to use. EHR systems hold patient records such as prescriptions, reports, and clinical notes, but people don’t enter this data in the same way. Some doctors write long notes, some keep them short. Even simple values like blood pressure appear in different formats.

So teams spend time fixing this data. They clean it, sort it, and bring it into one format before using it in AI systems. Without this step, the system will not give reliable results.
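As a rough illustration of the blood pressure case, the sketch below brings a few free-text readings into one `(systolic, diastolic)` form. The function name, the accepted input formats, and the plausibility limits are all assumptions made for this example, not part of any particular EHR product.

```python
import re
from typing import Optional, Tuple

# Two integers separated by "/", the word "over", or whitespace.
# The lookarounds stop the pattern from matching inside longer numbers.
_BP_PATTERN = re.compile(
    r"(?<!\d)(\d{2,3})\s*(?:/|over|\s)\s*(\d{2,3})(?!\d)",
    re.IGNORECASE,
)


def normalize_blood_pressure(raw: str) -> Optional[Tuple[int, int]]:
    """Return (systolic, diastolic) in mmHg, or None if the text cannot be parsed."""
    match = _BP_PATTERN.search(raw)
    if match is None:
        return None
    systolic, diastolic = int(match.group(1)), int(match.group(2))
    # Illustrative sanity limits (assumed): reject implausible or swapped values.
    plausible = 50 <= systolic <= 300 and 20 <= diastolic <= 200
    if not plausible or systolic <= diastolic:
        return None
    return systolic, diastolic
```

Readings that cannot be parsed come back as `None` rather than a guessed value, so they can be routed to manual review instead of silently entering the AI pipeline.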

AI Processing & Intelligence Layer

AI starts working only after the data becomes usable, and most of the effort happens before the model runs. McKinsey & Company says better data leads to better outcomes, which is why this step matters so much. Teams first fix errors in the data: they remove duplicates, fill missing values, and align formats. Then, models study patient history and look for patterns over time.

Clinical notes carry a lot of useful information, even though they don’t follow a fixed structure. NLP tools read these notes and pull out details such as symptoms, diagnoses, and treatments. At this point, the system only shows patterns; it does not make decisions. Many teams also adopt AI agent development to automate workflows and improve decision-making.
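The cleaning steps mentioned here (removing duplicates, filling missing values, aligning formats) might look something like the following minimal sketch. The field names, the two accepted date formats, and the choice to mark missing allergies as `"unknown"` are assumptions for illustration only.

```python
from datetime import datetime
from typing import Dict, List, Optional

# Assumed input formats; a real feed would need its own agreed list.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def to_iso_date(raw: str) -> Optional[str]:
    """Align differently formatted dates to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean_records(records: List[Dict]) -> List[Dict]:
    """Drop duplicate visits, align date formats, and mark missing values."""
    seen = set()
    cleaned = []
    for rec in records:
        visit_date = to_iso_date(rec.get("visit_date") or "")
        key = (rec.get("patient_id"), visit_date)
        if key in seen:
            continue  # same patient and visit date already kept
        seen.add(key)
        cleaned.append({
            "patient_id": rec.get("patient_id"),
            "visit_date": visit_date,
            # Use an explicit marker instead of inventing a clinical value.
            "allergies": rec.get("allergies") or "unknown",
        })
    return cleaned
```

Marking a gap as `"unknown"` is a deliberate choice in this sketch: for clinical fields, an explicit marker is usually safer than a statistically imputed value.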

Clinical Decision Support Outputs

AI shows up in hospitals in simple ways. It does not replace doctors. It helps them respond faster. Studies show these systems improve efficiency by around 15–20%.

The system alerts doctors about abnormal vitals, possible drug reactions, or patients who need attention. It shows this information through alerts and dashboards.

Doctors read these insights and act on them. The final decision always stays with the doctor.
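A very simple version of the abnormal-vitals alert could be a rule check like the one below. The vital names and ranges are placeholders chosen for this example; in practice, thresholds would be set and reviewed by clinical staff. The function only reports what it finds and takes no action, which matches the idea that the decision stays with the doctor.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Illustrative ranges only (assumed for this sketch, not clinical guidance).
NORMAL_RANGES: Dict[str, Tuple[float, float]] = {
    "heart_rate_bpm": (50, 110),
    "spo2_percent": (92, 100),
    "temperature_c": (35.5, 38.0),
}


@dataclass
class Alert:
    vital: str
    value: float
    message: str


def check_vitals(vitals: Dict[str, float]) -> List[Alert]:
    """Return one alert per vital that falls outside its configured range."""
    alerts = []
    for name, value in vitals.items():
        bounds = NORMAL_RANGES.get(name)
        if bounds is None:
            continue  # no rule configured for this vital
        low, high = bounds
        if value < low:
            alerts.append(Alert(name, value, f"{name} below {low}"))
        elif value > high:
            alerts.append(Alert(name, value, f"{name} above {high}"))
    return alerts
```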

HIPAA Compliance Implementation in AI Systems

Teams build security into the system from day one. The stakes are high: IBM’s 2023 Cost of a Data Breach report put the average healthcare breach at about $10.93 million. Encryption protects data in transit and at rest. Access control limits who can see patient data, and many systems also use multi-factor authentication for extra safety. The system tracks every action through audit logs, which helps during checks and investigations. While training AI models, teams remove patient identity details so the system learns patterns without exposing individuals.
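To make the last point concrete, here is a minimal sketch of removing identity details before training. It drops a small, assumed set of direct identifiers and replaces the patient ID with a keyed hash so that records for the same patient still link together. The field names are hypothetical, and this is far from a complete de-identification method: HIPAA’s Safe Harbor approach, for example, covers 18 categories of identifiers, including dates and geographic detail.

```python
import hashlib
import hmac
from typing import Dict

# Hypothetical field names; a real schema would have many more.
DIRECT_IDENTIFIERS = {"name", "phone", "email", "address", "ssn"}


def deidentify(record: Dict[str, object], secret_key: bytes) -> Dict[str, object]:
    """Drop direct identifiers and swap patient_id for a keyed pseudonym."""
    cleaned = {
        key: value
        for key, value in record.items()
        if key not in DIRECT_IDENTIFIERS and key != "patient_id"
    }
    # HMAC-SHA256 gives a stable token per patient that cannot be
    # recomputed without the secret key.
    token = hmac.new(
        secret_key,
        str(record["patient_id"]).encode("utf-8"),
        hashlib.sha256,
    ).hexdigest()
    cleaned["patient_token"] = token
    return cleaned
```

The secret key would need to be stored separately from the training data, since anyone holding both could re-link tokens to patients.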

Secure Architecture & System Integration

Most hospitals now use cloud or hybrid systems. Even then, connecting systems is not easy. AI systems connect to hospital software using APIs. This allows data to move safely between systems. Cloud setups give flexibility. On-premise setups give more control. Many hospitals use both. Every system stores data differently. So teams build translation layers to make the data consistent. Without this, AI systems do not work properly in real hospital settings.
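One way to picture a translation layer is as a set of small adapters, one per source system, that all produce the same internal shape. The two source formats below (`lab` and `ehr`) and every field name in them are invented for this sketch; real integrations would typically map to an agreed standard rather than an ad hoc dictionary.

```python
from typing import Callable, Dict


def from_lab_system(payload: Dict) -> Dict:
    """Adapter for a hypothetical flat lab-system message."""
    return {
        "patient_id": payload["pid"],
        "test": payload["test_code"],
        "value": float(payload["result"]),
        "unit": payload["units"],
    }


def from_ehr_export(payload: Dict) -> Dict:
    """Adapter for a hypothetical nested EHR export."""
    observation = payload["observation"]
    return {
        "patient_id": payload["patient"]["id"],
        "test": observation["name"],
        "value": float(observation["value"]),
        "unit": observation["unit"],
    }


# Registry of adapters keyed by source system name.
ADAPTERS: Dict[str, Callable[[Dict], Dict]] = {
    "lab": from_lab_system,
    "ehr": from_ehr_export,
}


def translate(source: str, payload: Dict) -> Dict:
    """Convert a source-specific payload into the common internal format."""
    if source not in ADAPTERS:
        raise ValueError(f"No adapter registered for source {source!r}")
    return ADAPTERS[source](payload)
```

Adding a new hospital system then means writing one more adapter and registering it, without touching the AI side.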

Steps to Build a HIPAA-Compliant AI System

Everything usually starts with one question: what problem is being solved? That decision shapes everything else. Data readiness matters just as much. Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data.

After that, secure data handling is set up first. Collection, storage, and access rules are defined early because fixing them later is complex.

Then, AI models are built and trained in controlled environments where data stays protected. Security features like encryption, access control, and logging are built into the system, not added later.
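Building access control and logging in from the start can be as simple, in outline, as routing every data request through one function that both checks permission and records the attempt. The roles, permissions, and in-memory log below are assumptions for this sketch; a production system would use a persistent, tamper-resistant log store.

```python
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "physician": {"read_record", "write_note"},
    "billing": {"read_billing"},
}

# In-memory stand-in for an audit log store.
audit_log: List[Dict[str, str]] = []


def access(user: str, role: str, action: str, patient_id: str) -> bool:
    """Check whether the role permits the action and log the attempt either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "patient_id": patient_id,
        "outcome": "allowed" if allowed else "denied",
    })
    return allowed
```

Denied attempts are logged as well as allowed ones, since failed access is often exactly what an investigation needs to see.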

Before deployment, systems go through compliance checks and risk testing. The checks cover not just whether the system works, but how it behaves under failure or stress.

After deployment, monitoring continues. Logs, system behaviour, and performance drift are all tracked. These systems never really reach a final stage; they need ongoing maintenance to stay stable.
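Tracking performance drift can start with something as basic as comparing recent model outputs against a baseline period. The sketch below flags drift when the recent mean moves more than a chosen number of baseline standard deviations. The function name and the default threshold of 2.0 are assumptions, and real monitoring would usually combine several such signals.

```python
from statistics import mean, stdev
from typing import Sequence


def drift_detected(
    baseline: Sequence[float],
    recent: Sequence[float],
    threshold: float = 2.0,
) -> bool:
    """Flag drift when the recent mean shifts by more than `threshold`
    baseline standard deviations."""
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least two baseline values and one recent value")
    spread = stdev(baseline)
    if spread == 0:
        # A constant baseline: any change in the mean counts as drift.
        return mean(recent) != mean(baseline)
    shift = abs(mean(recent) - mean(baseline)) / spread
    return shift > threshold
```

A flag from a check like this would normally trigger human review of the model and its inputs rather than any automatic change.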

Key Challenges in Healthcare AI Implementation

Healthcare AI is not simple to bring into real hospital systems. Healthcare data security is always a concern because patient information moves across many systems and needs protection at every step. Accuracy is another issue. When the data is incomplete or inconsistent, the output also becomes unreliable, which is a real risk in patient care. Integration is often messy in practice. Many hospitals still depend on older systems that don’t connect easily with modern AI tools, so data sharing and system flow don’t work smoothly.

Cost is another major factor. These systems need strong infrastructure, regular updates, and continuous monitoring to run properly. Healthcare regulations are also strict about how data is used and handled. That can slow things down a bit, but it’s necessary to stay aligned with AI in healthcare compliance and keep medical data safe.

How MultiQoS Builds Secure AI Systems for Healthcare

MultiQoS works as a healthcare tech partner that builds AI systems for real hospital use. The focus stays on making AI fit into daily clinical work instead of forcing doctors or staff to change how they already operate. Each solution is built based on the hospital’s actual workflow. There is no fixed setup. The system is shaped around how care teams handle patients, record data, and make decisions.

Security is handled from the start. Data is protected using encryption and access control. HIPAA-compliant practices are applied across storage, transfer, and processing. Patient information stays restricted and traceable at every step. Regular checks and safeguards are also added to reduce risk.

The systems are designed to scale as data grows. They can manage large patient volumes without performance issues. They also work across cloud or on-premise hospital environments, depending on the setup and infrastructure needs. Integration with EHR and EMR systems is also a key part. Data flows between hospital software and the AI system smoothly. It does not break existing processes or create manual work. This helps teams use AI without changing their daily routine.

MultiQoS also covers the full lifecycle. This includes development, deployment, and ongoing support. The system keeps running in real hospital conditions without constant disruption. Updates and improvements are handled over time, so the system stays stable and usable. MultiQoS also offers end-to-end AI development services for healthcare.

Conclusion

AI is already part of daily hospital work. It helps doctors review cases faster, keeps patient tracking more organized, and reduces routine workload for staff. But it only works well when it’s built into real systems, not added later as an extra layer.

At the same time, patient records need constant protection. They move between different tools, so they must stay secure throughout. If that part is overlooked, even a strong system can start creating issues.

In practice, things work better when AI and compliance operate together. AI helps teams move more quickly and spot problems early, while compliance keeps things steady and the data safe. Healthcare is already going this way, but it really comes down to how carefully everything is set up and handled over time.

FAQs

HIPAA is a set of rules for healthcare teams. These rules ensure patient information stays private and secure when AI systems use or share it. Any AI system that handles patient data must follow the same rules as the healthcare teams using it.

In simple terms, AI helps reduce the load on doctors and staff. It goes through large amounts of patient data, pulls out what matters, and sometimes even highlights things that may need attention sooner than usual. It’s more of a support layer than a replacement.

It usually comes down to getting the basics right: strong encryption, limited access, and clear control over who can see what. Most systems also keep logs, so there’s a record of how data is being used across the pipeline.

Some risks of using AI in healthcare include data leaks, incorrect information, and biased models. If not properly addressed, these issues can affect care and outcomes.

Most rely on a mix of secure infrastructure and strict internal rules. That includes encrypted storage, role-based access control, and regular audits. It’s not a one-time setup; it needs constant monitoring in practice.
